Hi Data Crunchers,

Continuous integration and automation are investments that let a software project scale significantly in both capacity and quality. This past year saw a lot of improvements, with an integrated commit flow and a series of added checks, such as linting the JavaScript and running the Python 3 tests automatically…

This also creates a virtuous circle that encourages developers to add more tests on their own (e.g. +200 since the beginning of this year), as all the plumbing is already done for them.

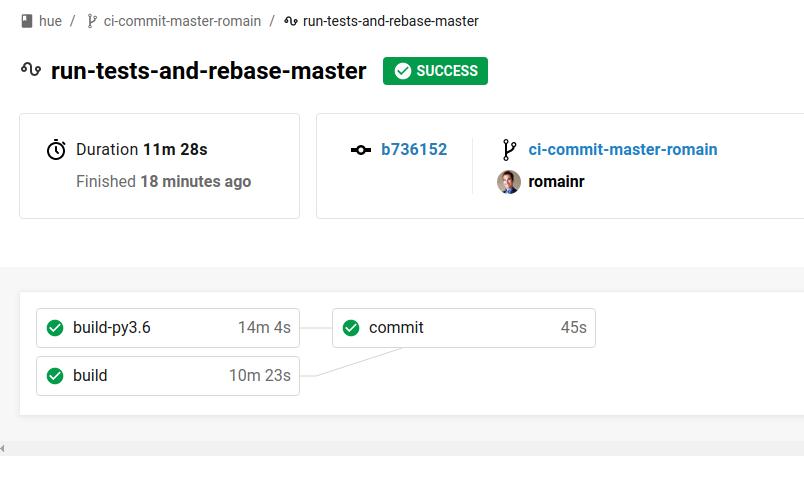

CI workflow


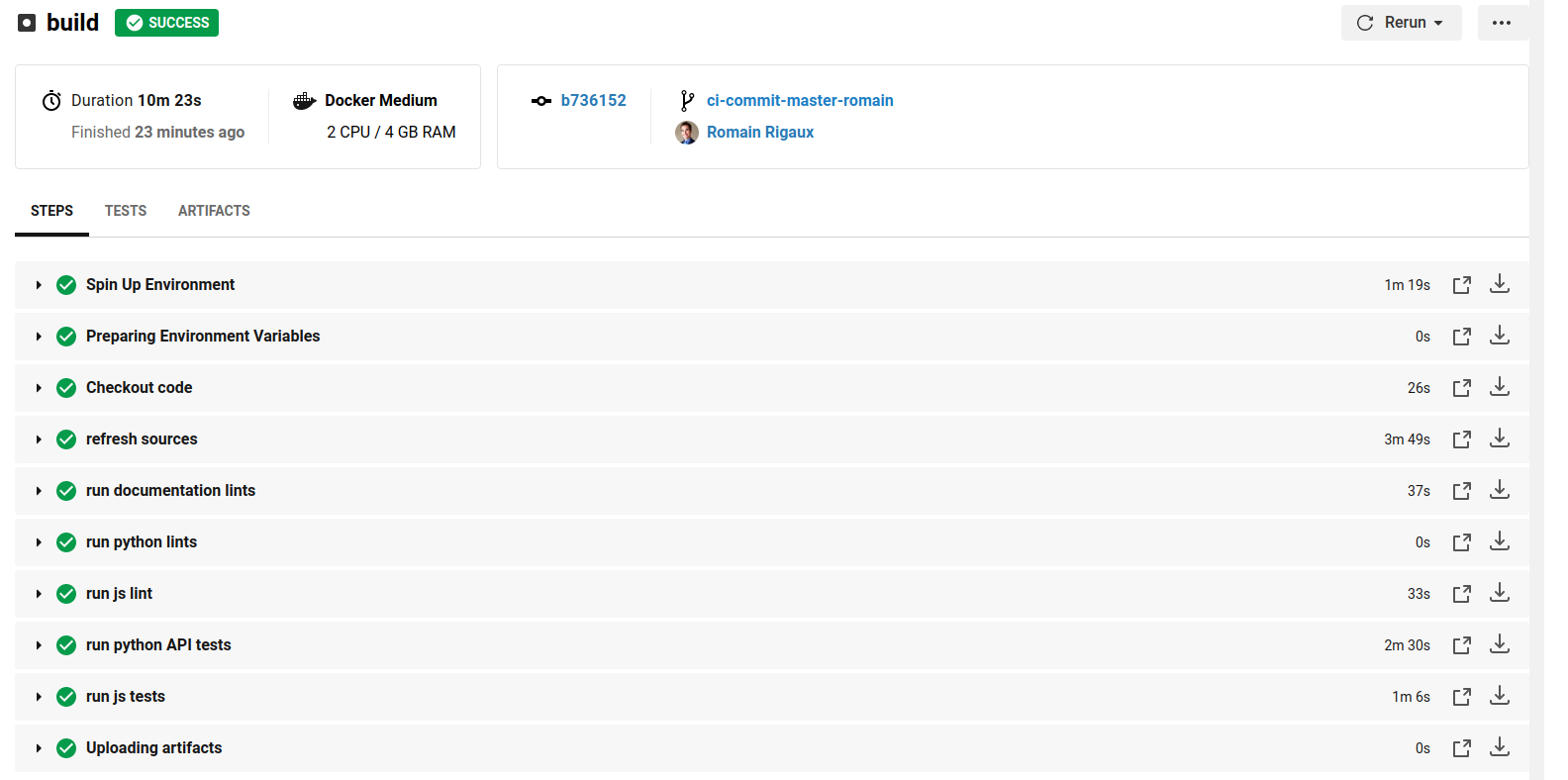

List of CI checks


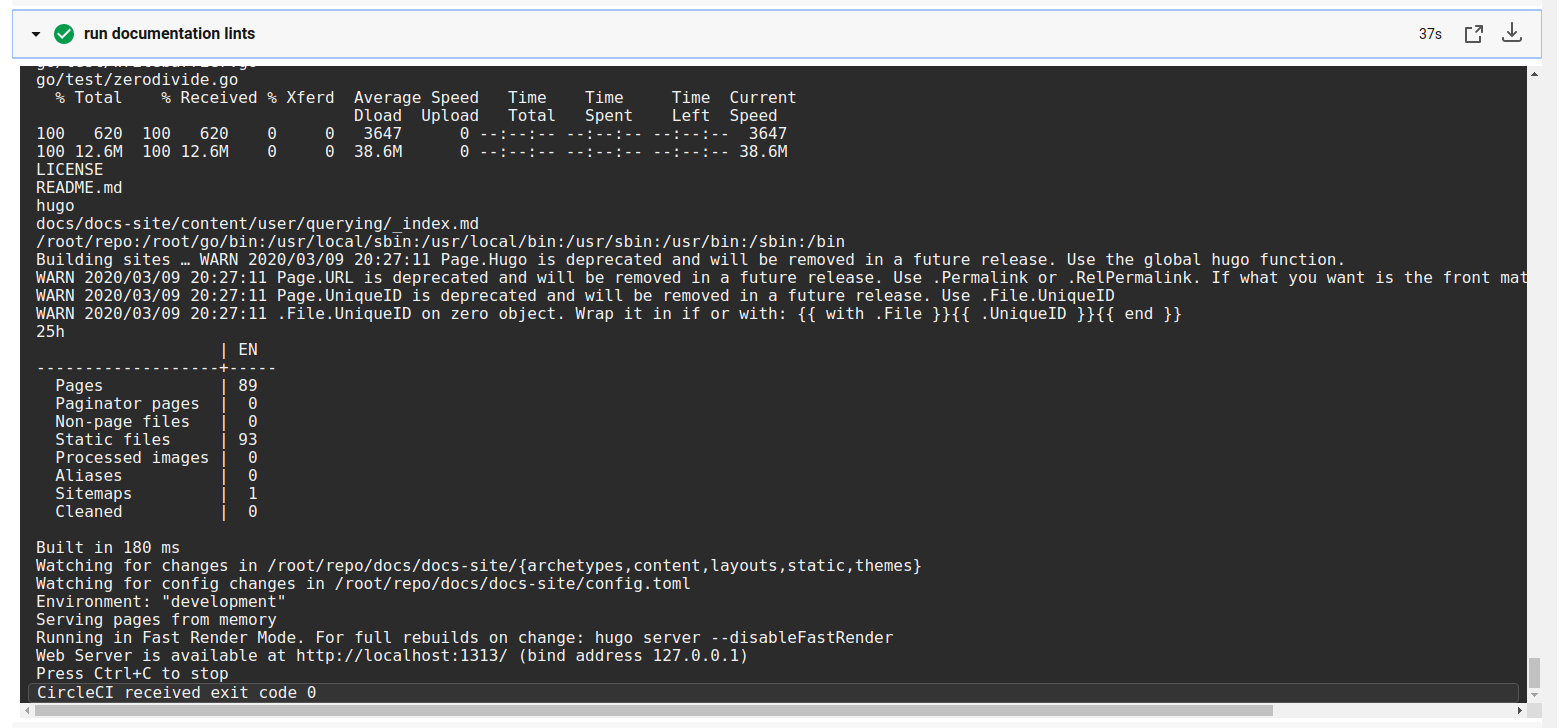

The next item on the list to automate was checking for dead links on https://docs.gethue.com and https://gethue.com/, which was previously done manually.

New website deadlinks check


Overall the action script will:

- Check whether the new commits contain a documentation or website change
- Then boot `hugo` to serve the site locally
- And run `muffet` to crawl the site and check the links
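The three steps above can be sketched roughly as follows. This is a minimal Python illustration, not the actual action script: the directory names in `SITE_DIRS`, the `origin/master` base branch, and the helper names are assumptions made for the example, and the fixed sleep is a crude stand-in for waiting until the server is up. The only tool-specific facts relied on are that `hugo server` serves on port 1313 by default and that `muffet` takes the URL to crawl as its argument.

```python
import subprocess
import time

# Hypothetical locations of the Hugo sites inside the repository.
SITE_DIRS = ("docs/docs-site/", "docs/gethue/")


def touches_site(changed_files, site_dirs=SITE_DIRS):
    """Return True if any changed path lives under one of the site directories."""
    prefixes = tuple(site_dirs)
    return any(path.startswith(prefixes) for path in changed_files)


def changed_files(base, head):
    """List the files modified between two git revisions."""
    result = subprocess.run(
        ["git", "diff", "--name-only", f"{base}..{head}"],
        check=True, capture_output=True, text=True,
    )
    return [line for line in result.stdout.splitlines() if line]


def check_links(site_dir, url="http://localhost:1313/"):
    """Serve the site with hugo, crawl it with muffet, return muffet's exit code."""
    server = subprocess.Popen(["hugo", "server", "--source", site_dir])
    try:
        time.sleep(5)  # crude fixed wait; a real script might poll the URL instead
        return subprocess.run(["muffet", url]).returncode
    finally:
        # Always stop the local server, even if the crawl fails.
        server.terminate()
        server.wait()


def main():
    """Skip the check when no site file changed, otherwise crawl one site."""
    files = changed_files("origin/master", "HEAD")  # assumed base branch
    if not touches_site(files):
        print("No documentation or website change, skipping link check")
        return 0
    return check_links(SITE_DIRS[0])
```

Calling `main()` from a CI step and exiting with its return value would make the check fail whenever `muffet` reports a broken link.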

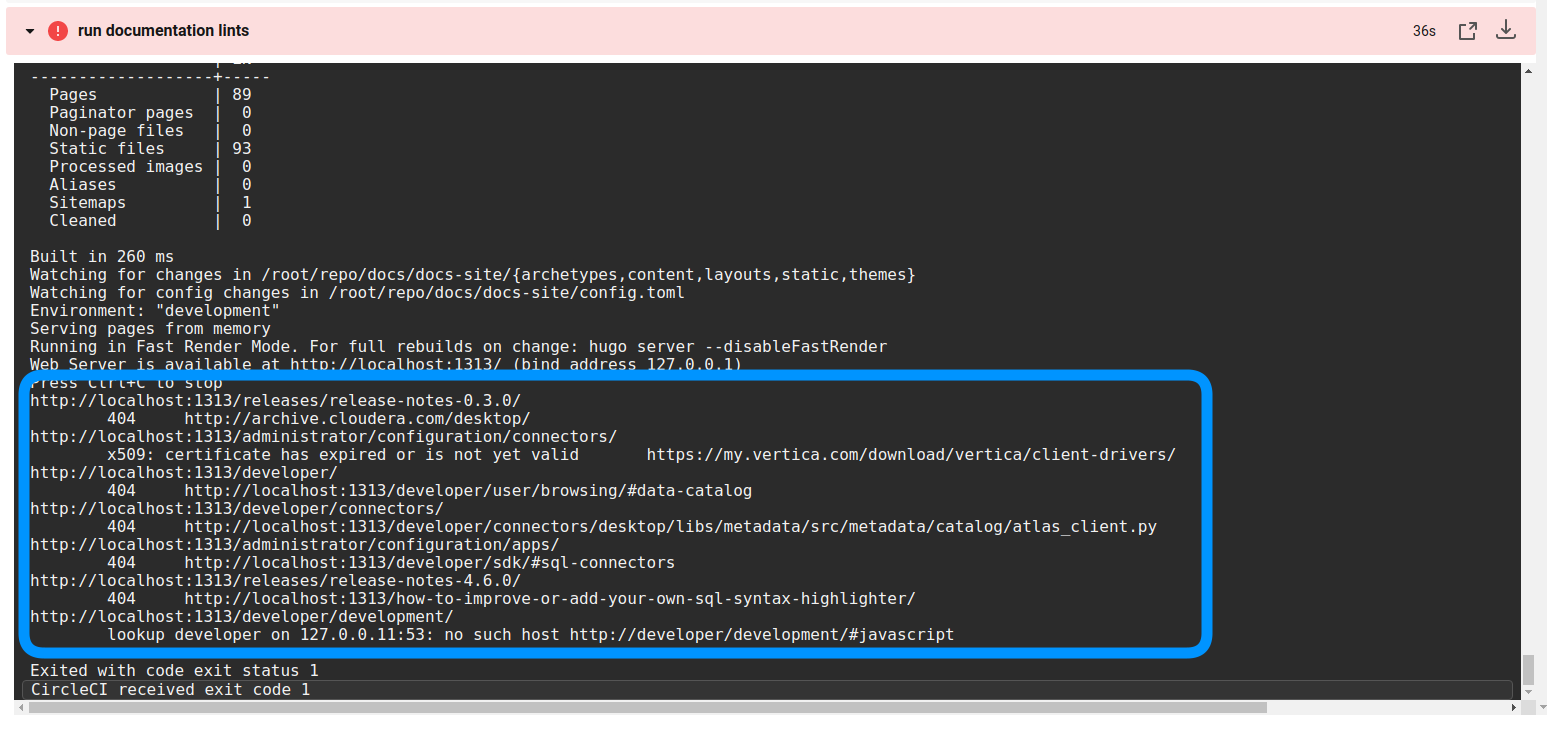

Link checking failure


Note: it is handy to have a URL blacklist and a lower number of concurrent crawler connections to avoid hammering some external websites (e.g. https://issues.cloudera.org/browse/HUE, which is referenced a lot).
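As a rough illustration of that note, the sketch below assembles a `muffet` command line with an exclusion list and a connection cap. The helper name `build_muffet_command` and the default values are hypothetical, and the `--exclude` and `--max-connections` flag names are assumed from recent `muffet` releases (older versions may name them differently), so check `muffet --help` for the installed version.

```python
def build_muffet_command(url, excluded_patterns=(), max_connections=10):
    """Build the argument list for a throttled muffet crawl.

    excluded_patterns are regular expressions for URLs to skip entirely.
    Flag names are an assumption based on recent muffet releases.
    """
    command = ["muffet", f"--max-connections={max_connections}"]
    for pattern in excluded_patterns:
        command.append(f"--exclude={pattern}")
    command.append(url)
    return command


# Example: skip the issue tracker links and keep the crawl gentle.
example = build_muffet_command(
    "http://localhost:1313/",
    excluded_patterns=[r"issues\.cloudera\.org/browse/HUE"],
    max_connections=5,
)
```

The resulting list can be passed directly to `subprocess.run`, which avoids shell quoting problems with the regular expressions.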

What is your favorite CI process? Any feedback? Feel free to comment here or on @gethue!