Last update Aug 30th 2018

Latest

A major improvement in 4.2 is IMPALA-1575: Impala queries not closed by Hue now have their resources released after 10 minutes (previously they were never released). This makes a big difference with many users, and it is worth upgrading for this fix alone.

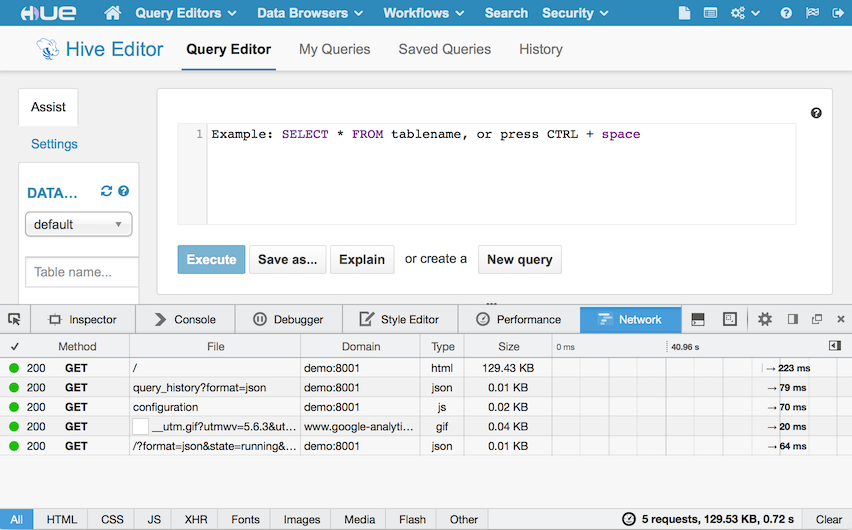

Hue 4.2 includes 500+ bug fixes. Hue also now caches SQL metadata throughout the application, meaning the list of tables of a database or the column descriptions of a table are fetched only once and reused in the autocomplete, table browser, left and right panels, etc. Profiling calls is also simpler in 4.2: the total time taken by each request is logged automatically.

Typical setups range from 2 to 10 Hue servers. For example: 10 Hue servers, 250 unique users per week, and peaks of 125 users per hour with 300 queries.

In practice, ~25 users per Hue instance at peak time is the rule of thumb. This accounts for the worst-case scenarios, and it will go much higher with the upcoming http://gunicorn.org/ integration. Most scale issues are actually caused by resource-intensive operations, like large downloads of query results or a slow RPC call from Hue to a service (e.g. submitting a query hangs), not by the number of users.
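To make the rule of thumb concrete, here is a minimal sizing sketch. The function name `hue_instances_needed` and its parameters are hypothetical, chosen for illustration; the only number taken from the text is the ~25 users per instance at peak. Treat the result as a rough starting point, since heavy downloads or slow backend calls matter more than the raw user count.

```python
import math


def hue_instances_needed(peak_users, users_per_instance=25):
    """Rough estimate of the Hue instances needed for a given peak load.

    users_per_instance defaults to the ~25 users/instance rule of thumb;
    it is an assumption to adjust for your own workload.
    """
    if peak_users < 0:
        raise ValueError("peak_users must be non-negative")
    if users_per_instance <= 0:
        raise ValueError("users_per_instance must be positive")
    # Always keep at least one instance, and round up partial instances.
    return max(1, math.ceil(peak_users / users_per_instance))


if __name__ == "__main__":
    # Peak of 125 users, as in the example setup above.
    print(hue_instances_needed(125))  # prints 5
```

With the example setup above (peaks at 125 users), this suggests at least 5 instances, so 10 servers leaves comfortable headroom.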

Here is a list of tips for tuning the performance of Hue:

General Performance

- Each Hue instance supports 50+ concurrent users by default when following this guide. Each additional Hue instance behind the load balancer adds capacity for about 50 more concurrent users. If not using Cloudera Manager, you can manually set up NGINX or Apache in front of Hue.

- Move the database from the default SQLite to another backend such as MySQL/Postgres/Oracle, which handle locking better than the default SQLite DB. Hue should not be run on SQLite in an environment with more than 1 concurrent user. Read more about [using an External Database for Hue Using Cloudera Manager][6].

- There are some memory fragmentation issues in Python that manifest in Hue. Check the memory usage of Hue periodically. Browsing an HDFS directory with many files, downloading a query result, and copying HDFS files are costly operations memory-wise.

- Upgrade to later versions of Hue. There are significant performance gains available in every release.

Query Editor Performance

- Compare performance of the Hive Query Editor in Hue with the exact same query in a beeline shell against the exact same HiveServer2 instance that Hue is pointing to. This will determine if Hue needs to be investigated or HiveServer2 needs to be investigated.

- Check the logging configuration for HiveServer2 by going to Hive service Configuration in Cloudera Manager. Search for HiveServer2 Logging Threshold and make sure that it is not set to DEBUG or TRACE. If it is, drop the logging level to INFO at a minimum.

- Configure individual dedicated HiveServer2 instances for each Hue instance, separate from HiveServer2 instances used by other 3rd party tools or clients, or configure Hue to point to multiple HS2 instances behind a Load Balancer.

- Tune the query timeouts for HiveServer2 (in hive-site.xml) and Impala in the hue_safety_valve or hue.ini (see Query Life Cycle).

- Downloading query results past a few thousand rows will lag and significantly increase CPU/memory usage in Hue. This is why the results are truncated until further improvements are made.
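For the beeline comparison suggested in the Query Editor tips above, a small timing helper can give a baseline to compare against what you observe in the Hue editor. This is only a sketch: the helper names `beeline_argv` and `time_command` are hypothetical, and the JDBC URL you pass in is your own. It relies on beeline's standard `-u` (JDBC URL) and `-e` (query string) options.

```python
import subprocess
import time


def beeline_argv(jdbc_url, query):
    """Build the beeline command line for a single query (-u URL, -e query)."""
    return ["beeline", "-u", jdbc_url, "-e", query]


def time_command(argv):
    """Run a command, discard its output, and return (seconds, exit code)."""
    start = time.perf_counter()
    completed = subprocess.run(
        argv,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    elapsed = time.perf_counter() - start
    return elapsed, completed.returncode
```

For example, `time_command(beeline_argv(jdbc_url, "SELECT 1"))` run against the same HiveServer2 instance Hue points to returns the wall-clock time of the beeline run. Note that this includes JVM startup and connection time, so timing a trivial query first gives a fixed overhead to subtract.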

Feel free to ask any questions about the architecture or usage of the server in the comments, on @gethue, or on the hue-user list!