Hue provides an interface for Impala, the next-generation SQL engine for Hadoop. To improve performance further, Hue can distribute the query load across all of the Impala workers.

Tutorial

This tutorial demonstrates how to set up Hue to query multiple Impalads (Impala daemons):

- Configure Hue 3.6 on one node of a 4-node RedHat 6 cluster to work with multiple Impalads.

- Load balance the connections to the Impalads using HAProxy 1.4. Any load balancer that persists connections should work.

Here is a quick video demonstrating how to communicate with multiple Impalads in Hue!

Configuring Hue

There are two ways to configure Hue to communicate with multiple Impalads.

Configuration via Cloudera Manager

-

From Cloudera Manager, click on “Clusters” in the menu and find your Hue service.

-

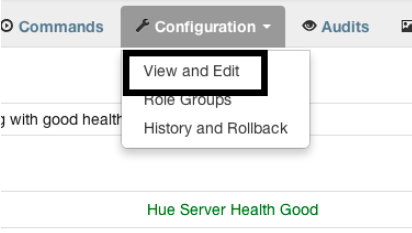

From the Hue service, go to “Configuration -> View and Edit”

-

We must provide a safety valve configuration in Cloudera Manager to use the appropriate load balancer and socket timeout. Go to “Service-Wide -> Advanced” and click on the value for “Hue Service Advanced Configuration Snippet (Safety Valve)”. You can use the following as a template for the value:

[impala]

server_host=

server_port=

server_conn_timeout=
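As an illustration of what a filled-in value might look like, here is a minimal Python sketch that renders the “impala” section from a host, a port, and a timeout given in hours. The function name `render_impala_section` and the hostname `haproxy.example.com` are hypothetical; the port 10001 is the one HAProxy binds to later in this tutorial.

```python
def render_impala_section(host: str, port: int, timeout_hours: float) -> str:
    """Render the [impala] section text for the safety valve or hue.ini.

    server_conn_timeout is expressed in seconds, so hours are converted here.
    """
    timeout_seconds = int(timeout_hours * 3600)
    lines = [
        "[impala]",
        f"server_host={host}",
        f"server_port={port}",
        f"server_conn_timeout={timeout_seconds}",
    ]
    return "\n".join(lines)
```

Calling `render_impala_section("haproxy.example.com", 10001, 1)` produces a section that points Hue at the load balancer with a timeout of 3600 seconds.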

For more information on configuring Hue via Cloudera Manager, see Managing Clusters.

Manual configuration

- Open /etc/hue/hue.ini with your favorite text editor.

- Change the “server_conn_timeout” option under the “impala” section to a large value. This value is in seconds (e.g. 1 hour = 3600 seconds). See the template in “Configuration via Cloudera Manager” for the configuration option.

- Next, set the new host and port in the “impala” section of hue.ini. The hostname is defined in “server_host” and the port is defined in “server_port”. See the template in “Configuration via Cloudera Manager” for an example configuration.

HAProxy Installation/Configuration

- Download and unzip the binary distribution of HAProxy 1.4 on a node that does not have Hue installed.

- Add the following HAProxy configuration to /etc/impala/haproxy-impala.conf:

global

daemon

nbproc 1

maxconn 100000

log /dev/log local6

defaults

log global

mode tcp

option tcplog

option tcpka

timeout connect 3600000ms

timeout client 3600000ms

timeout server 3600000ms

listen impala

bind 0.0.0.0:10001

balance leastconn

server impala1 server1.cloudera.com:21050 check

server impala2 server2.cloudera.com:21050 check

server impala3 server3.cloudera.com:21050 check

- Start HAProxy:

haproxy -f /etc/impala/haproxy-impala.conf

The key configuration options are balance and server in the listen section, along with the timeout options in the defaults section. When the balance parameter is set to leastconn, HAProxy sends each new connection from Hue to the impalad with the fewest active connections. The server parameters define which servers are used for load balancing and take the form:

server <name> <address>[:port] [settings ...]

In the configuration above, the server “impala1” is available at “server1.cloudera.com:21050”, “impala2” is available at “server2.cloudera.com:21050”, and “impala3” is available at “server3.cloudera.com:21050”. The timeout parameters bound how long HAProxy waits: “timeout connect” limits how long it waits to establish a connection to an impalad, while “timeout client” and “timeout server” limit how long a connection may stay inactive on each side. In this example, all three are set to 1 hour (3600000 ms).
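To make the leastconn behavior concrete, here is a small Python sketch of the selection rule. It is a simplified model and not HAProxy's actual implementation: it ignores server weights, health checks, and connections that close, and the function names are hypothetical.

```python
def pick_leastconn(active: dict) -> str:
    """Return the server with the fewest active connections.

    Ties go to the server listed first, since dicts keep insertion order.
    """
    return min(active, key=active.get)


def assign_connections(servers: list, count: int) -> list:
    """Assign `count` long-lived connections one by one using leastconn."""
    active = {name: 0 for name in servers}
    order = []
    for _ in range(count):
        chosen = pick_leastconn(active)
        active[chosen] += 1  # the connection stays open, as Hue's do
        order.append(chosen)
    return order
```

With three servers and connections that never close, `assign_connections(["impala1", "impala2", "impala3"], 6)` cycles through the servers evenly, so each impalad ends up holding two connections.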

HAProxy is configured to bind to “0.0.0.0:10001”. Hue can therefore point at HAProxy, by setting “server_host” to the HAProxy node and “server_port” to 10001, and HAProxy will transparently pick one of the least utilized Impalads.
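Before pointing Hue at the load balancer, it can be useful to confirm that the bind port accepts TCP connections. The following Python sketch does this with the standard `socket` module; the helper name `can_connect` is hypothetical. It only shows that something is listening on the port, not that an impalad answered behind it.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the host and attempts a TCP handshake
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures
        return False
```

For example, `can_connect("haproxy.example.com", 10001)` would check the port used in this tutorial, with `haproxy.example.com` standing in for the hostname of your HAProxy node.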

Conclusion

Load balancing Impala queries distributes the load, such as the final result aggregation, across all the Impalads. By design, Impala currently requires a persistent network connection, so Hue keeps its connections open and the load balancer must preserve them. We hope this helps you make the most of your Hadoop cluster!

Have any suggestions? Feel free to tell us what you think through hue-user or @gethue.