Interactive Spark in your Browser - Scala by the Bay

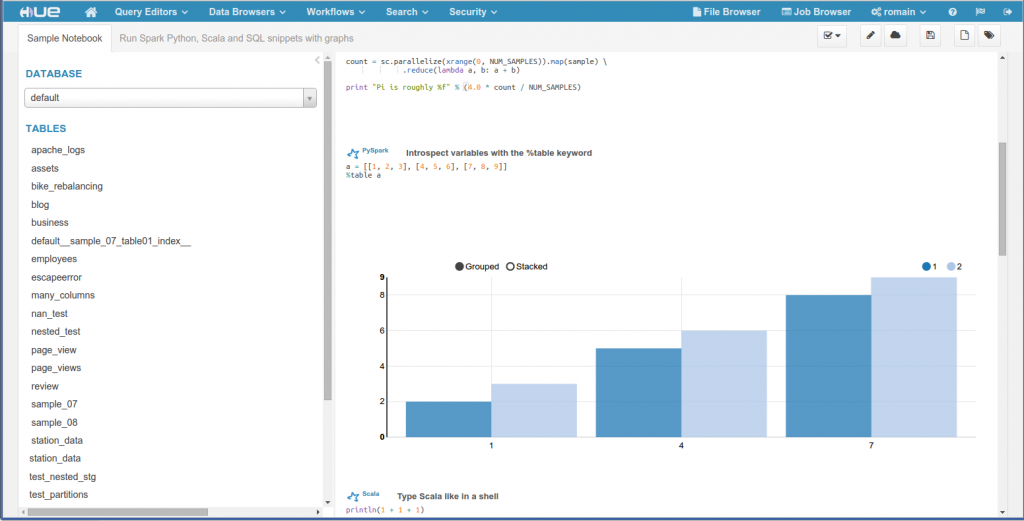

Running Spark scripts directly from a browser improves the user experience: everybody has a web browser, the command line can be avoided, and built-in graphing and visualization make it easy to explore and understand data in a few clicks. It also simplifies administration, since everything is centralized in one service and becomes accessible to non-native clients. For this purpose, an open source Spark Job Server was developed to provide Scala, SQL and Python in a web shell. The main Hadoop components of the platform are also integrated in the same interface.

This talk describes the architecture of the Spark Server and its main features:

- Scala, Python and SQL submissions
- Impersonation
- Security
- Job progress and canceling
- YARN, HDFS and Hive integration

The server also ships with a friendly user interface built as a Hue app. We will focus on explaining how the server and its interface were built, how to use the API, and which lessons were learned. The end-user interaction will be demoed live.